Why Randomness Is Operational Suicide

In consumer AI, randomness feels creative. In logistics, the same randomness in a warehouse AI gets inventory written off. A Vision-Language Model in a logistics environment serves a fundamentally different purpose: it validates physical conditions against the data held in enterprise systems.

Entropy = Cost.

Determinism = Margin.

The Full Execution Chain

- Receiving: Where digital truth begins

- Internal: Put-away, fulfillment, counts

- Dispatch: Where liability accelerates

- Boundary: Gate validation & governance

Every token a Vision-Language Model generates inside a warehouse can update WMS records, trigger replenishment, release shipments, or shift liability between parties. This is not a capability overview. It is a risk statement. And the architectural decisions that control how those tokens are produced will determine whether your Physical AI operates as a strategic asset or an undetected source of compounding liability.

01- Receiving Execution: Where Digital Truth Begins

Receiving is the wedge. Data corruption at the point of entry contaminates everything downstream. Once a PO number, SKU, or quantity is incorrectly written into the WMS, no subsequent process can compensate. Every workflow that follows is operating on a false premise.

What the VLM Must Do:

- Extract PO number and ASN reference

- Parse SKU list and validate quantities

- Detect damage and flag occlusions

- Cross-verify against ERP in real time

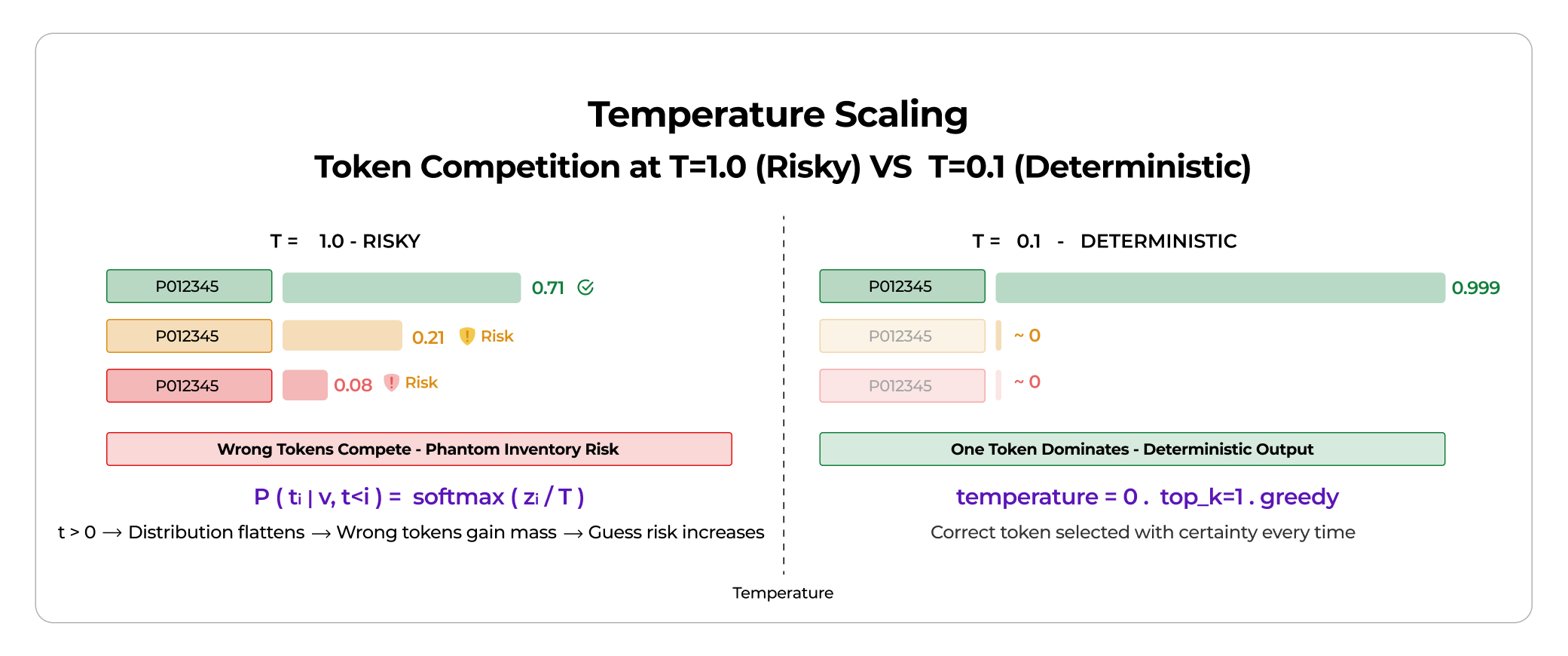

Token Prediction - Temperature Scaling

$$P(t_i \mid v, t_{<i}) = \mathrm{softmax}(z_i / T)$$

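$z_i$ is the vector of raw logits for position $i$, $T$ is the temperature, $v$ is the visual input, and $t_{<i}$ are the tokens already generated. Raising $T$ above 1 flattens the distribution: lower-probability tokens gain mass, and the risk of a guess increases. Lowering $T$ toward 0 sharpens it; $T = 0$ is handled as the limiting case, greedy (argmax) decoding.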

At higher T, similar-looking SKUs compete for selection. This is the difference between a correct PO and a phantom inventory entry.

For example, suppose that at T = 1.0 the candidate readings have these probabilities: "PO12345" (correct) 0.71, "PO12845" 0.21, and "PO12395" 0.08. Rescaling the same logits to T = 0.1 makes the correct reading dominate at about 0.999995, while the alternatives collapse to near-zero values.
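A minimal Python sketch of the temperature effect. It assumes the three PO readings are the only candidates and treats each whole string as a single choice (a real tokenizer splits a PO number into several tokens); the logits are back-derived from the T = 1.0 probabilities, so the numbers are illustrative, not measured.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Temperature-scaled softmax: P_k = exp(z_k / T) / sum_j exp(z_j / T)."""
    if temperature <= 0:
        raise ValueError("T must be > 0; T = 0 is handled as greedy argmax instead")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


candidates = ["PO12345", "PO12845", "PO12395"]  # the first reading is the correct one
# Logits chosen so that softmax at T = 1.0 reproduces 0.71 / 0.21 / 0.08.
logits = [math.log(p) for p in (0.71, 0.21, 0.08)]

for T in (1.0, 0.1):
    probs = softmax_with_temperature(logits, T)
    print(f"T={T}: " + ", ".join(f"{c}={p:.6f}" for c, p in zip(candidates, probs)))
```

Dividing the same logits by a smaller T widens the gaps between them, which is why the probability of the top reading approaches 1 without the model itself changing.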

Deterministic Configuration:

- temperature = 0

- top_k = 1

- top_p = 1

- presence_penalty = 0

- repetition_penalty = 1.0

- output_format = JSON schema constrained

- confidence_threshold = 0.85 (outputs below it abstain)
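One way to make this configuration enforceable rather than advisory is a pre-flight check that refuses to start the pipeline when any setting drifts. A minimal Python sketch: `DecodingConfig` and `preflight` are hypothetical names whose fields mirror the list above, and mapping them onto a specific inference server is an assumption left open (some servers, vLLM among them, treat temperature 0 as a switch to greedy decoding rather than a literal divisor).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecodingConfig:
    # Hypothetical field names mirroring the deterministic configuration above.
    temperature: float = 0.0
    top_k: int = 1
    top_p: float = 1.0
    presence_penalty: float = 0.0
    repetition_penalty: float = 1.0
    json_schema_constrained: bool = True
    confidence_threshold: float = 0.85


def preflight(cfg: DecodingConfig) -> list[str]:
    """Return every setting that would let randomness or free-form output through."""
    issues = []
    if cfg.temperature != 0.0:
        issues.append(f"temperature={cfg.temperature} (expected 0)")
    if cfg.top_k != 1:
        issues.append(f"top_k={cfg.top_k} (expected 1)")
    if cfg.top_p != 1.0:
        issues.append(f"top_p={cfg.top_p} (expected 1, nucleus off)")
    if cfg.presence_penalty != 0.0 or cfg.repetition_penalty != 1.0:
        issues.append("penalties must stay neutral (presence 0, repetition 1.0)")
    if not cfg.json_schema_constrained:
        issues.append("output is not JSON-schema constrained")
    if cfg.confidence_threshold < 0.85:
        issues.append(f"confidence_threshold={cfg.confidence_threshold} (expected >= 0.85)")
    return issues


print(preflight(DecodingConfig()))                            # the documented defaults
print(preflight(DecodingConfig(temperature=0.7, top_p=0.9)))  # a "creative" config
```

An empty list means the pipeline may start; anything else should block deployment rather than log a warning.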

The abstention principle: if visual_confidence < threshold, return "UNSURE". Do not guess.
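A minimal Python sketch of the abstention principle applied field by field. It assumes the pipeline already produces a per-field `visual_confidence` score in [0, 1] (how that score is derived is outside this sketch); `gate_fields` and the sample values are hypothetical.

```python
UNSURE = "UNSURE"


def gate_fields(extraction, threshold=0.85):
    """Keep a field's value only if its visual confidence clears the threshold."""
    gated = {}
    for field, (value, visual_confidence) in extraction.items():
        if not 0.0 <= visual_confidence <= 1.0:
            raise ValueError(f"{field}: confidence {visual_confidence} outside [0, 1]")
        # Below threshold: abstain instead of passing the best guess downstream.
        gated[field] = value if visual_confidence >= threshold else UNSURE
    return gated


# Hypothetical receiving extraction: (value, visual_confidence) per field.
extraction = {
    "po_number": ("PO12345", 0.97),
    "asn_reference": ("ASN-0042", 0.62),  # e.g. a smudged label
}
print(gate_fields(extraction))
```

The PO number passes through; the ASN reference comes back as "UNSURE", which routes it to a human instead of into the WMS.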

"Receiving is not about generation. It is about verification. The AI's job is not to fill gaps. It is to know when to stop."

02- Internal Execution: Where Entropy Multiplies

Warehouse errors rarely present themselves as obvious failures. They degrade accuracy gradually. A single misplaced SKU doesn't trigger an alert. It quietly fuels inventory drift until a cycle count reveals a gap that operations cannot explain and finance cannot resolve.

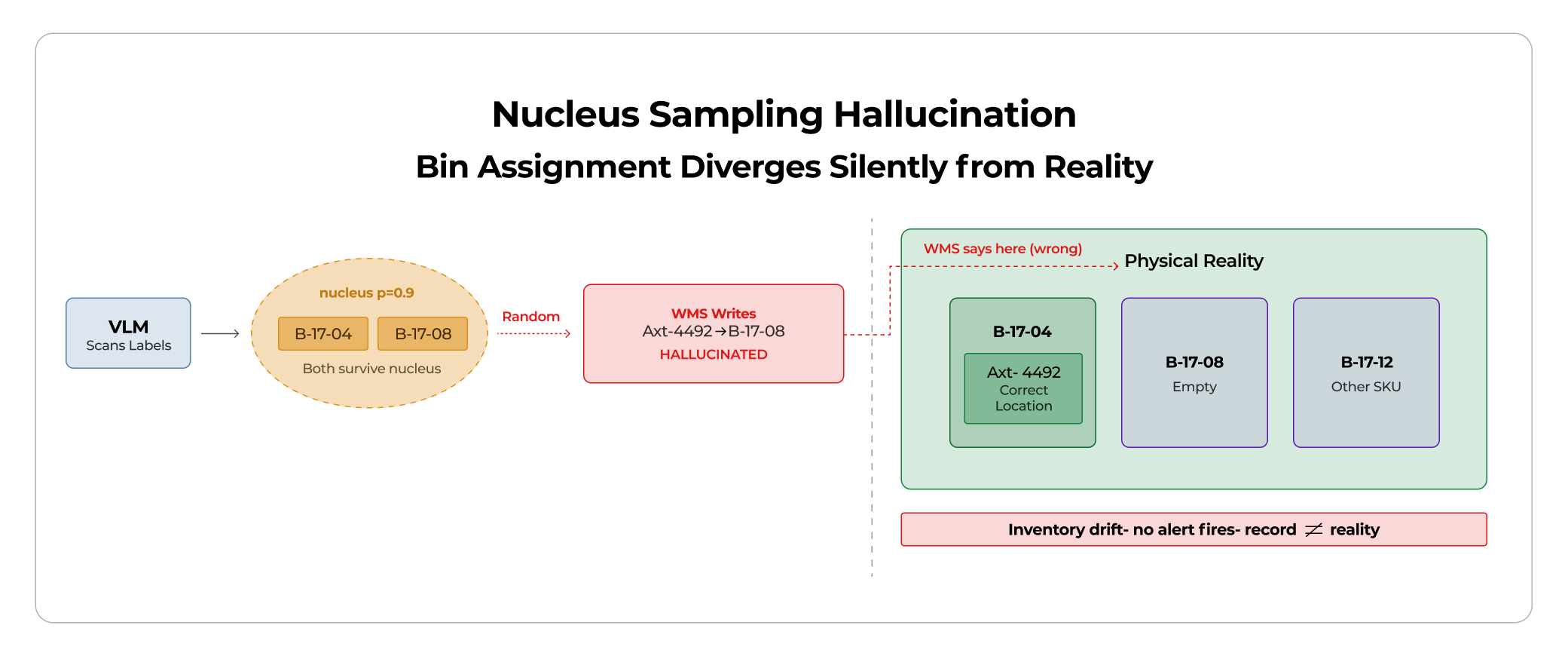

Nucleus Sampling — Why Multiple Candidates Survive:

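With p = 0.9, the nucleus is the smallest set of top-ranked candidates whose cumulative probability reaches 0.9, so near-identical bin IDs that split the probability mass all survive. Random sampling then chooses one of them. The result is a committed WMS update based on chance.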

Example: The WMS writes Inventory[Axt-4492] = Bin B-17-08 (WRONG — hallucinated), while physical reality is Bin B-17-04 (CORRECT — now invisible). Inventory drift begins. No alert fires. No human sees it. The record and reality have silently diverged.
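A small Python sketch of the failure mode. The bin probabilities are hypothetical, and different `random.Random` seeds stand in for separate runs on the same image.

```python
import random

# Hypothetical bin probabilities for SKU Axt-4492 (illustrative, not measured).
BIN_PROBS = {"B-17-04": 0.48, "B-17-08": 0.34, "B-17-01": 0.12, "B-18-04": 0.06}


def nucleus(probs, p):
    """Top-p set: the smallest run of highest-probability candidates whose mass reaches p."""
    ranked = sorted(probs.items(), key=lambda kv: (-kv[1], kv[0]))
    kept, mass = [], 0.0
    for candidate, prob in ranked:
        kept.append((candidate, prob))
        mass += prob
        if mass >= p:
            break
    return kept


def sample_nucleus(probs, p, rng):
    """Nucleus sampling: draw one candidate from the top-p set, weighted by probability."""
    candidates, weights = zip(*nucleus(probs, p))
    return rng.choices(candidates, weights=weights, k=1)[0]


def greedy(probs):
    """Greedy decoding: always the most probable candidate (ties broken by name)."""
    return min(probs, key=lambda c: (-probs[c], c))


survivors = [c for c, _ in nucleus(BIN_PROBS, 0.9)]
sampled = {sample_nucleus(BIN_PROBS, 0.9, random.Random(seed)) for seed in range(50)}
print("nucleus at p=0.9:", survivors)
print("bins chosen across 50 runs:", sorted(sampled))
print("greedy:", greedy(BIN_PROBS))
```

Three bins survive the nucleus, so repeated runs commit different bins for the same pallet, while greedy returns B-17-04 every time. Greedy is repeatable, not infallible: if the model's top candidate were wrong, it would be wrong consistently.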

Scale Calculation — Cycle Count Accuracy Drop: 99.7% to 98.5%:

The 1.2-point drop means 1.2% of 500K SKUs, or 6,000 additional mismatches; assuming roughly ten mismatches are resolved per audit event, that is 600 labor audit events. Hallucination cost is not theoretical. It is a line item on your operations budget.
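The same arithmetic as a reusable Python function, so the figures can be rerun for a different SKU base. `drift_cost` is a hypothetical helper, and `mismatches_per_audit=10` is only the ratio implied by the 6,000-to-600 figures, not a measured value.

```python
import math


def drift_cost(sku_count, accuracy_before, accuracy_after, mismatches_per_audit):
    """Extra mismatches caused by an accuracy drop, and audit events needed to clear them."""
    extra_mismatches = round(sku_count * (accuracy_before - accuracy_after))
    audit_events = math.ceil(extra_mismatches / mismatches_per_audit)
    return extra_mismatches, audit_events


# Assumption: ten mismatches resolved per audit event, as implied by the text.
print(drift_cost(500_000, 0.997, 0.985, mismatches_per_audit=10))
```

Rounding the mismatch count guards against floating-point residue from subtracting the two accuracies; rounding audits up reflects that a partial batch still needs a visit.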

Beam Search vs Nucleus Sampling:

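Nucleus Sampling: Stochastic. Identical inputs can produce different outputs. Unsuitable for production verification.

Beam Search: Deterministic. Keeps the highest-scoring partial sequences at each step, so it scores whole sequences rather than single tokens. It is still a heuristic, not a guaranteed global optimum, but beam width = 3 improves stability without stochastic branching.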
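A toy Python comparison of greedy decoding and beam search, both deterministic. The two-step "model" is a hypothetical lookup table built so that the locally best first token leads to a worse overall sequence; beam width 3 follows the text.

```python
import math

# Hypothetical two-step "model": next-token probabilities keyed by the prefix so far.
TOY_MODEL = {
    (): {"A": 0.6, "B": 0.4},
    ("A",): {"X": 0.55, "Y": 0.45},
    ("B",): {"X": 0.1, "Y": 0.9},
}


def greedy_decode(model, steps):
    """Pick the single most probable token at every step (ties broken alphabetically)."""
    prefix = ()
    for _ in range(steps):
        dist = model[prefix]
        prefix += (min(dist, key=lambda tok: (-dist[tok], tok)),)
    return list(prefix)


def beam_search(model, steps, beam_width):
    """Keep the beam_width best partial sequences by cumulative log-probability."""
    beams = [((), 0.0)]  # (prefix, log-probability)
    for _ in range(steps):
        candidates = [
            (prefix + (tok,), score + math.log(p))
            for prefix, score in beams
            for tok, p in model[prefix].items()
        ]
        candidates.sort(key=lambda c: (-c[1], c[0]))  # deterministic ordering
        beams = candidates[:beam_width]
    best_prefix, best_score = beams[0]
    return list(best_prefix), math.exp(best_score)


print("greedy:", greedy_decode(TOY_MODEL, steps=2))
print("beam:  ", beam_search(TOY_MODEL, steps=2, beam_width=3))
```

Greedy commits to A and ends with A X (probability 0.33); beam search keeps B alive and returns B Y (0.36). Both give the same answer on every run, but beam search only explores `beam_width` paths, so on larger problems it can still miss the best sequence.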

03- Dispatch Execution: Where Liability Accelerates

Dispatch establishes contractual truth. The instant a shipment is validated and released, the AI-generated record becomes the legally binding baseline against which all subsequent claims, disputes, and SLA obligations are measured. At the point of contractual commitment, statistical approximation is no longer a tolerable margin; it becomes a liability.

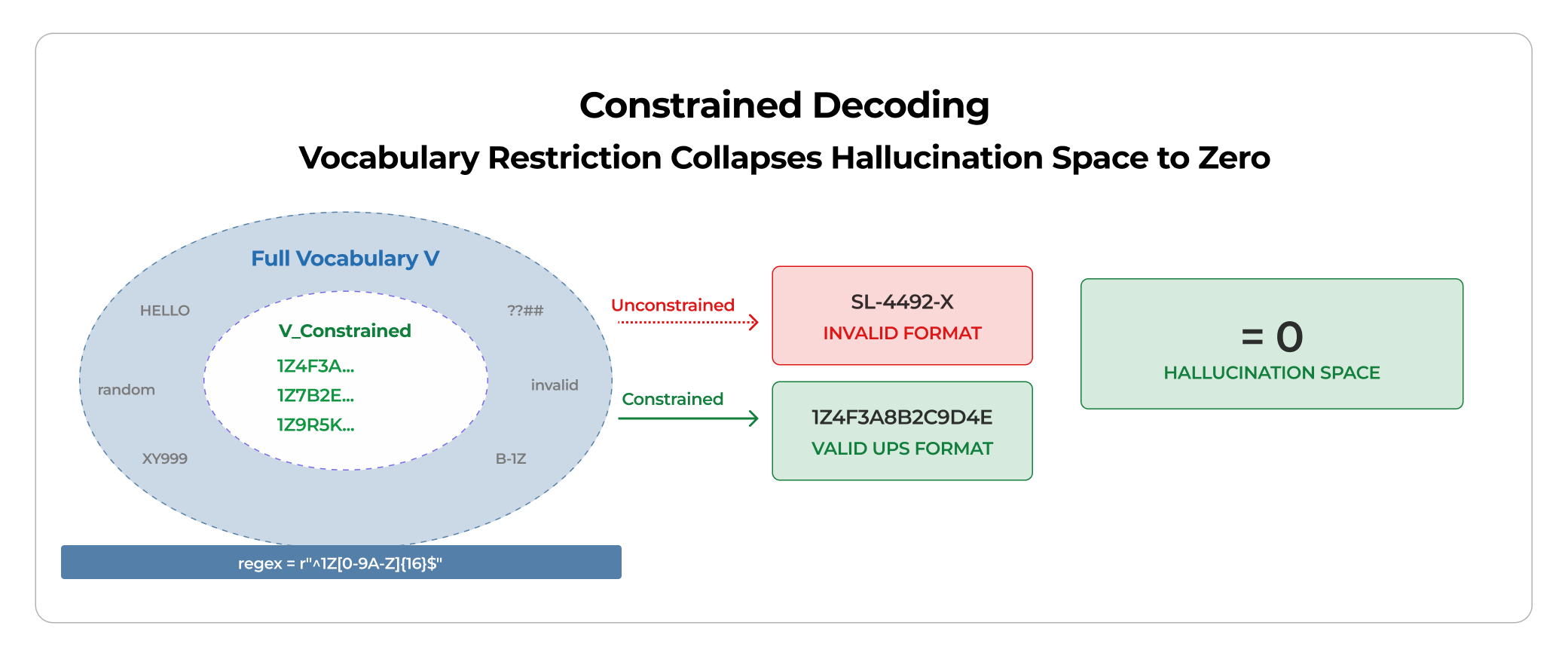

Constrained Decoding — Hallucination Space Collapses:

Hard constraint: only valid UPS tracking formats (regex: ^1Z[0-9A-Z]{16}$). Constraining the vocabulary at decode time means every token that would break the grammar is masked out, and the probability mass is redistributed only over the compliant set. The model cannot output a non-compliant tracking number, so the hallucination space collapses to zero for non-compliant outputs. It does not collapse for well-formed but wrong ones: a syntactically valid tracking number can still be misread, which is why constrained decoding must be paired with confidence gating and cross-system validation.
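A character-level Python sketch of the mechanism: at each position, characters the pattern forbids are masked out and the remaining probability is renormalized before the greedy pick. Production decoders apply the same idea to subword tokens through a grammar or regex engine; here the per-position allowed sets are hand-written for this one pattern, and the logits and the target ID are hypothetical.

```python
import math
import re
import string

TRACKING_RE = re.compile(r"^1Z[0-9A-Z]{16}$")  # the hard constraint from the text
BODY_CHARS = frozenset(string.digits + string.ascii_uppercase)


def allowed_at(position):
    """Characters the pattern permits at each position of the 18-character ID."""
    if position == 0:
        return frozenset("1")
    if position == 1:
        return frozenset("Z")
    return BODY_CHARS


def masked_softmax(logits, allowed):
    """Mask forbidden characters (equivalent to logit = -inf) and renormalize the rest."""
    kept = {ch: z for ch, z in logits.items() if ch in allowed}
    if not kept:
        raise ValueError("no grammar-compliant character available at this step")
    peak = max(kept.values())
    exps = {ch: math.exp(z - peak) for ch, z in kept.items()}
    total = sum(exps.values())
    return {ch: e / total for ch, e in exps.items()}


def constrained_greedy(step_logits):
    """Greedy decoding restricted, step by step, to characters the pattern allows."""
    if len(step_logits) != 18:
        raise ValueError("a 1Z tracking number has exactly 18 characters")
    out = []
    for pos, logits in enumerate(step_logits):
        probs = masked_softmax(logits, allowed_at(pos))
        out.append(min(probs, key=lambda ch: (-probs[ch], ch)))  # argmax, ties by char
    result = "".join(out)
    if not TRACKING_RE.fullmatch(result):  # cannot fail by construction; kept as a guard
        raise RuntimeError(f"constraint violated: {result}")
    return result


# Hypothetical raw logits: the intended ID scores 2.0 at each step...
target = "1Z" + "A1B2C3D4E5F6G7H8"
step_logits = [{ch: 2.0, "-": 1.0, "o": 0.5} for ch in target]
step_logits[6]["-"] = 5.0   # ...but the model "prefers" a dash here
step_logits[10]["o"] = 4.0  # ...and a lowercase o here

unconstrained = "".join(max(lg, key=lg.get) for lg in step_logits)
constrained = constrained_greedy(step_logits)
print("unconstrained:", unconstrained, "valid:", bool(TRACKING_RE.fullmatch(unconstrained)))
print("constrained:  ", constrained, "valid:", bool(TRACKING_RE.fullmatch(constrained)))
```

The unconstrained argmax produces `1ZA1B2-3D4o5F6G7H8`, which fails the regex; the constrained decoder never emits the dash or the lowercase character because they are removed before the pick.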

04- Boundary Execution: Governance at the Gate

The boundary is legal territory. When goods exit, liability transfers, insurance activates, customs obligations are triggered, and the SLA clock starts. If the AI validates incorrectly, the erroneous output becomes evidentiary data in any subsequent dispute.

Creative vs Deterministic Model at Gate Validation:

Creative Model: SealNumber = "SL-4492-X" — Interpolated. Wrong. Liability transferred on a fabricated seal.

Deterministic Model: SealNumber = null, Status = "REVIEW_REQUIRED". The truck waits. Operations may complain. Legal will thank you.

Entropy of Token Distribution:

$$H(P) = -\sum_{k} P(t_i = k \mid v, t_{<i}) \log P(t_i = k \mid v, t_{<i})$$

The sum runs over the candidate tokens $k$ at position $i$. Lower temperatures lead to a sharper distribution, lower entropy, and lower operational risk. Logistics AI must minimize H(P) during decoding. Not because creativity is bad. Because variability is systemic risk.
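A worked instance, reusing the PO example from Receiving (0.71 / 0.21 / 0.08 at T = 1.0) and treating its three candidate readings as the whole distribution, a simplification since real vocabularies are far larger:

$$H_{T=1.0} = -\left(0.71 \ln 0.71 + 0.21 \ln 0.21 + 0.08 \ln 0.08\right) \approx 0.243 + 0.328 + 0.202 \approx 0.773 \text{ nats}$$

Rescaling the same logits to $T = 0.1$ gives $P \approx (0.999995,\ 5.1 \times 10^{-6},\ 3.3 \times 10^{-10})$, so

$$H_{T=0.1} \approx 6.8 \times 10^{-5} \text{ nats}$$

Entropy falls by roughly four orders of magnitude without any change to the model, only to how its logits are decoded.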

The Deterministic Stack Architecture

True hallucination mitigation is not a single configuration parameter. It is a layered architecture in which each layer closes off a different attack surface for stochastic error.

1. Decoding Discipline: T = 0, top_k = 1, no nucleus sampling, greedy or beam search only.

2. Constrained Grammar: JSON schema enforced, field-specific token restrictions, regex-validated IDs, vocabulary restricted to compliant tokens.

3. Confidence Gating: If visual_confidence < threshold, abstain. UNSURE is a valid and correct response. No output below threshold.

4. Cross-System Validation: ERP lookup, ASN reconciliation, weight tolerance check. Output validated before commit.

5. Two-Pass Verification: Pass 1 = Extraction. Pass 2 = Logical validation. Neither pass alone is sufficient.
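Layers 3 to 5 can be sketched as a single commit gate in Python. Everything here is hypothetical: the `Extraction` record stands in for Pass 1 output from the VLM, two dictionaries stand in for live ERP and ASN lookups, and the 2% weight tolerance is an illustrative value, not a recommendation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Extraction:
    """Pass 1 output (hypothetical shape): what the VLM read, plus its confidence."""
    po_number: str
    sku: str
    quantity: int
    weight_kg: float
    visual_confidence: float


# Hypothetical stand-ins for ERP / ASN lookups.
ERP_OPEN_PO_SKUS = {"PO12345": {"Axt-4492"}}
ASN_LINES = {("PO12345", "Axt-4492"): {"quantity": 40, "unit_weight_kg": 2.5}}


def verify(ex: Extraction, threshold: float = 0.85, weight_tolerance: float = 0.02):
    """Pass 2: logical validation. Returns (status, reasons); only COMMIT may write to the WMS."""
    # Layer 3: confidence gating. Abstain before doing any lookups.
    if ex.visual_confidence < threshold:
        return "UNSURE", ["visual confidence below threshold"]
    reasons = []
    # Layer 4: cross-system validation against ERP and ASN.
    if ex.sku not in ERP_OPEN_PO_SKUS.get(ex.po_number, set()):
        reasons.append("PO/SKU pair not open in ERP")
    line = ASN_LINES.get((ex.po_number, ex.sku))
    if line is None:
        reasons.append("no matching ASN line")
    else:
        if ex.quantity != line["quantity"]:
            reasons.append(f"quantity {ex.quantity} != ASN {line['quantity']}")
        expected_kg = line["quantity"] * line["unit_weight_kg"]
        if abs(ex.weight_kg - expected_kg) > weight_tolerance * expected_kg:
            reasons.append(f"weight {ex.weight_kg} kg outside tolerance of {expected_kg} kg")
    return ("COMMIT", []) if not reasons else ("REVIEW_REQUIRED", reasons)


for ex in (
    Extraction("PO12345", "Axt-4492", 40, 99.1, 0.95),  # consistent everywhere
    Extraction("PO12345", "Axt-4492", 48, 99.1, 0.95),  # quantity disagrees with ASN
    Extraction("PO12845", "Axt-4492", 40, 99.1, 0.95),  # misread PO
    Extraction("PO12345", "Axt-4492", 40, 99.1, 0.62),  # low visual confidence
):
    print(verify(ex))
```

Returning reasons alongside the status keeps the review queue actionable: a human sees why the record was held, not just that it was.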

The Doctrine

Physical AI is not "Describe what you see." Physical AI is "Validate what exists."

The difference determines inventory accuracy, labor efficiency, chargeback reduction, and enterprise trust.

The mandate is: Execute. Verify. Validate. Abstain.

Start at Receiving. Control entropy. Enforce determinism. Propagate truth through Internal Execution, Dispatch, and Boundary. Randomness belongs in research labs. Determinism belongs on the dock floor.

When Physical AI behaves like infrastructure rather than a chatbot, it becomes trusted. Not impressive. Trusted.

That is the real competitive advantage.
