The most important conversation in enterprise technology right now is not about what AI can do. It is about what happens when AI gets it wrong in an environment that cannot afford to wait for a correction.

Leaders across logistics, manufacturing, and supply chain are no longer asking whether AI works. They are asking whether it is trustworthy enough to operate without a human catching its mistakes before those mistakes become operational reality. That distinction is reshaping how the most serious teams in these industries think about AI deployment, and it starts with understanding that not all AI is built for the same world.

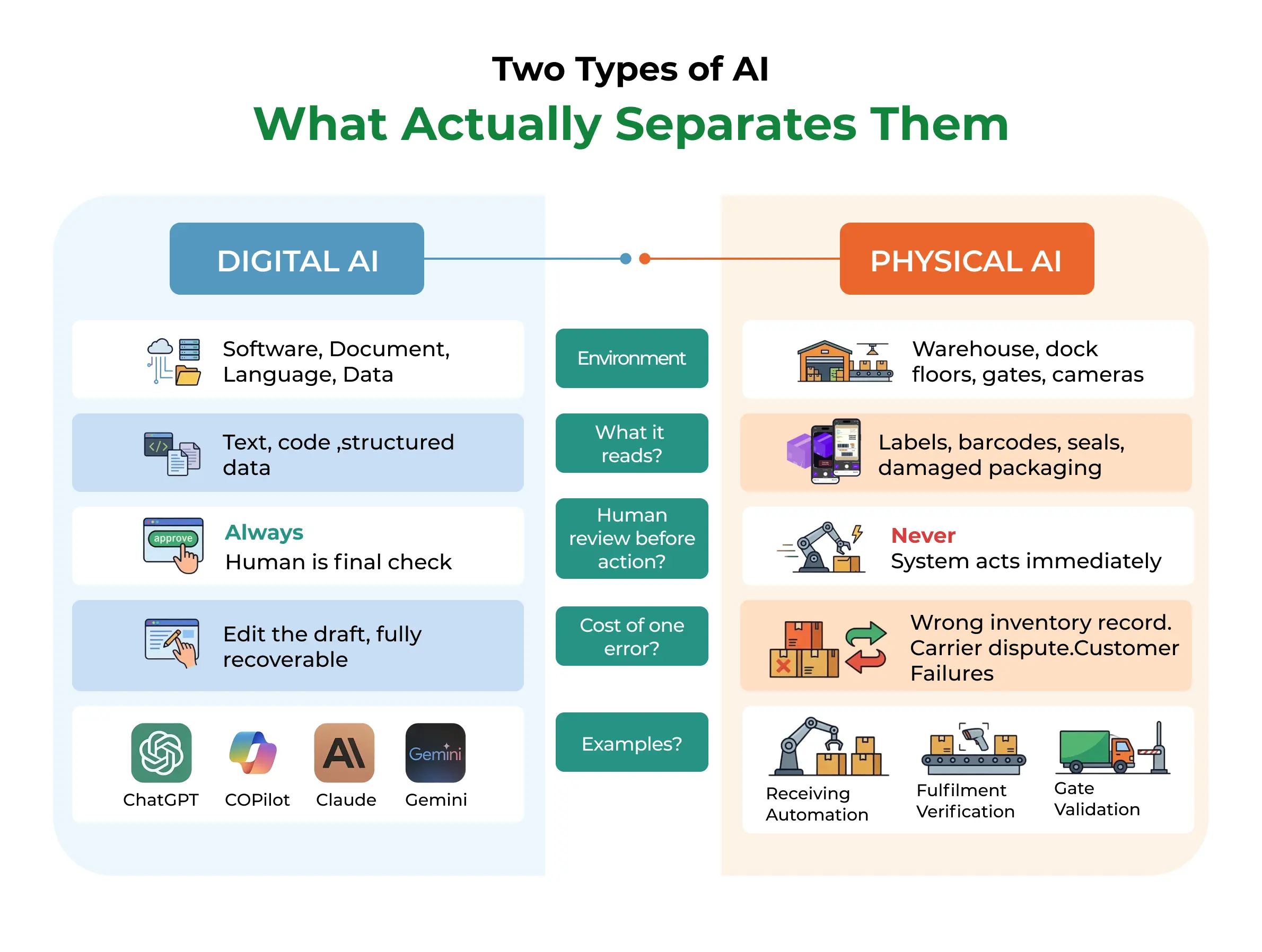

There are two kinds of AI operating right now. Most people only know one of them.

Digital AI: The AI You Already Know

Digital AI lives entirely in software. It reads, writes, summarizes, generates, translates, and reasons within the digital realm. When you ask a language model to draft an email, analyze a contract, or autocomplete your code, that is Digital AI at work.

The stakes in Digital AI are real but recoverable. If it writes a flawed summary, you rewrite it. If it generates a bad code snippet, you debug it. If the email misses the tone, you edit it before you send it. There is always a human in the loop, and that human is the final check before anything consequential happens.

This is the AI that has captured the world's imagination. The demos are impressive. The reasoning, at times, is startling. And because the cost of a mistake is manageable, we have collectively made peace with the fact that it is not always right.

Physical AI: The AI You Need to Understand

Physical AI operates at the intersection of the digital and the physical world. It uses cameras, sensors, and vision models to read what exists in reality and then translate that reality into records that enterprise systems can act on immediately. It reads a shipping label on a damaged box as it moves through a fulfillment center. It validates a seal number on a truck at a gate. It confirms whether the SKU in a worker's hand matches the order being picked.

When Physical AI reads a label, it is not drafting a sentence for a human to review. It is updating a Warehouse Management System. It is triggering a replenishment order. It is transferring liability. It is releasing a shipment worth hundreds of thousands of dollars. There is no human re-reading the output before the action fires.

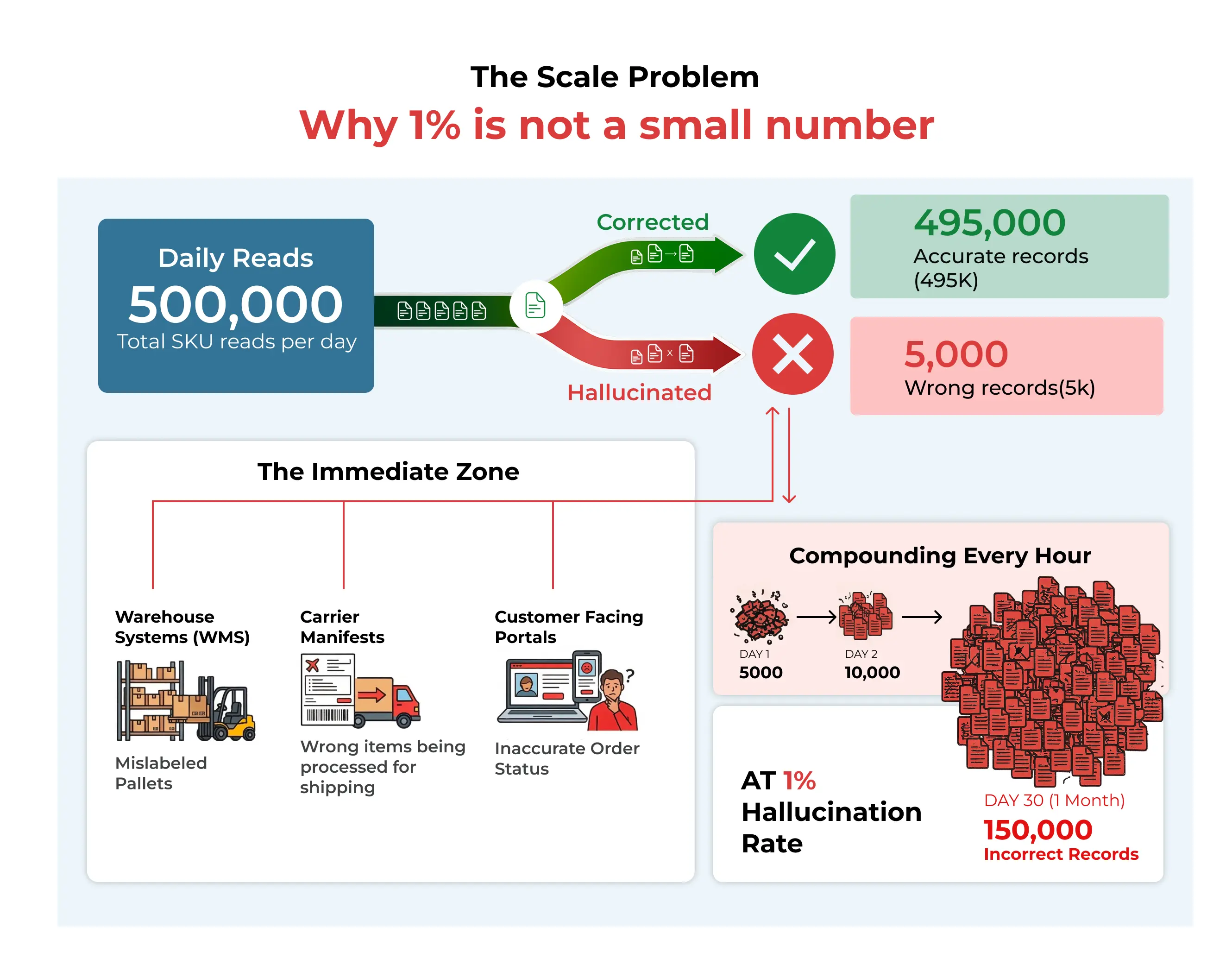

A fulfillment center processing five thousand orders a day is not asking AI to be creative. It is asking AI to be right. Every time. Without exception. And when it is not right, the error does not sit in a draft folder waiting for review. It moves downstream at operational speed, directly into inventory records, carrier manifests, customer commitments, and compliance documents.

The Problem Both Share: Hallucination

Here is where the story gets uncomfortable, because Digital AI and Physical AI share the same fundamental flaw. They hallucinate.

Hallucination is the technical term for when an AI model produces output that is confident, plausible-sounding, and wrong.

In Digital AI, you have likely experienced this already. You ask a language model to summarize a long document, and it gets the early sections right, then, somewhere in the middle, it starts describing things that were never in the text. You ask a question deep in a long conversation, and it forgets the context it acknowledged twenty messages ago. You ask it to cite a source, and it invents one with a real-sounding author, a credible journal name, and a year that checks out, except the paper does not exist.

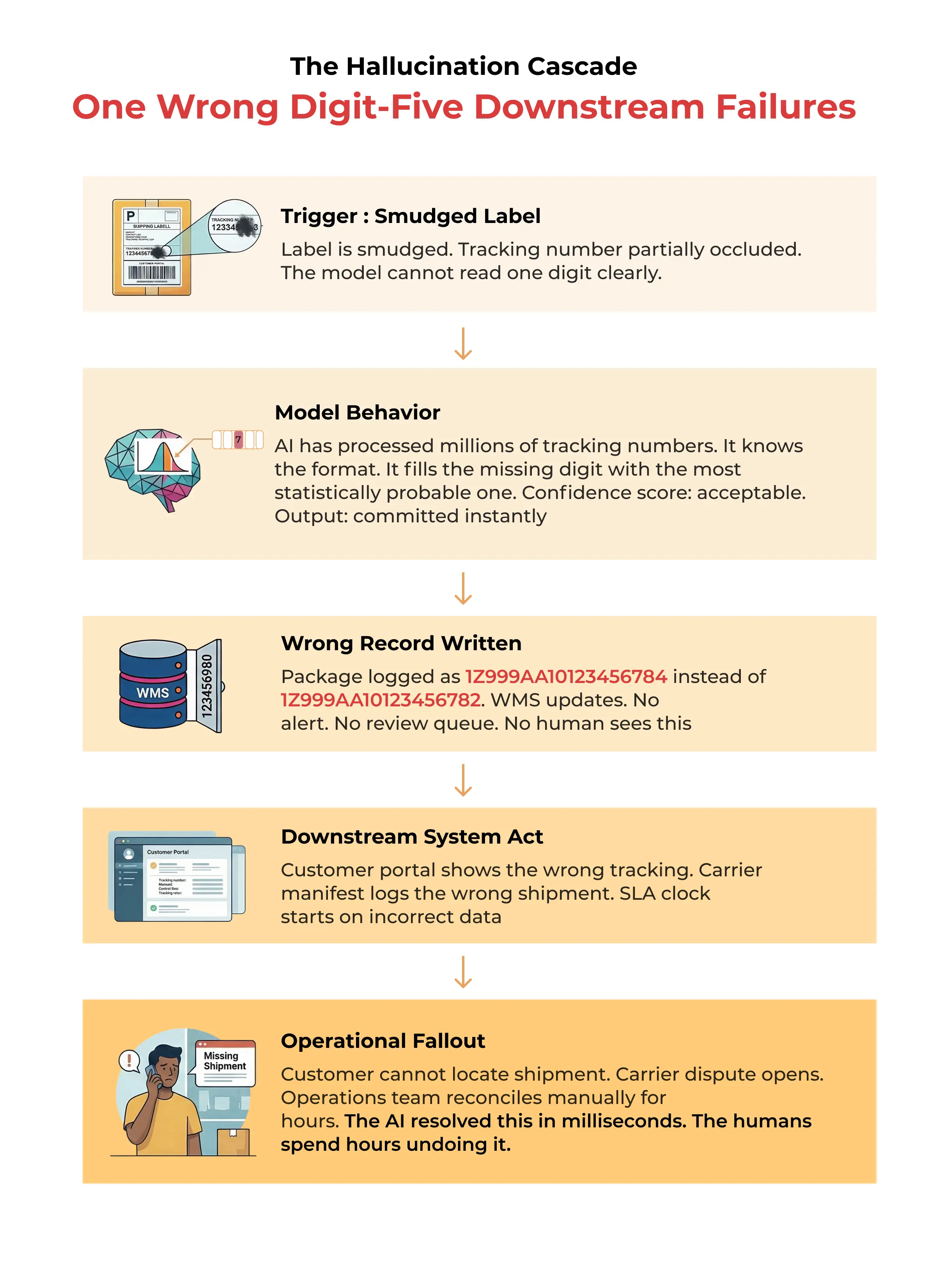

The model is not lying. It is doing exactly what it was built to do: predict the most statistically plausible next output given everything it has seen. When context gets stretched, when input is ambiguous, when information is incomplete, it fills the gap with what seems most likely. And it does so with complete confidence.
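To make "fills the gap with what seems most likely" concrete, here is a minimal sketch of greedy decoding over a short label read. It assumes, purely for illustration, that a vision model emits a probability for each candidate character at each position; the function name `greedy_decode` and the probability values are hypothetical, not taken from any real system. The point is that a near coin-flip at one position produces an output string that looks exactly as certain as a clean read.

```python
from typing import Dict, List


def greedy_decode(position_probs: List[Dict[str, float]]) -> str:
    """Pick the single most likely character at each position.

    The returned string carries no trace of how uncertain each pick was.
    """
    return "".join(max(probs, key=probs.get) for probs in position_probs)


# Hypothetical per-character probabilities for a 4-character label read.
# Position 3 is "smudged": the model is nearly split between '8' and '3'.
smudged_read = [
    {"A": 0.98, "4": 0.02},
    {"7": 0.97, "1": 0.03},
    {"8": 0.51, "3": 0.49},
    {"Q": 0.99, "O": 0.01},
]

print(greedy_decode(smudged_read))  # A78Q
```

Nothing in the string `A78Q` tells a downstream system that the third character was a 51/49 guess.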

This is tolerable in a chatbot. You fact-check. You reread. You push back. Now place that same tendency inside a Physical AI system that is reading a partially torn barcode at three in the morning in a distribution center.

Why This Is the Conversation the Industry Needs to Have

The enterprise AI conversation has been dominated by capability. What can it do? How fast? What does the demo look like? These are not the wrong questions. They are simply incomplete ones.

The question that separates AI that impresses from AI that earns trust is this: what happens when the input is not clean? When the label is torn, the light is poor, the font is degraded, and the package is moving. Does the system make a confident guess? Or does it know what it does not know?

In every physical environment where AI operates, that question determines whether the technology helps the business or quietly works against it. The answer is not just about model accuracy. It is about system architecture, decoding discipline, and the engineering philosophy embedded in how the AI was built from the ground up.
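One way to build "knowing what it does not know" into a system is to let it abstain. The sketch below is a hypothetical illustration, not a description of any specific product: it reuses the same per-character probability assumption, tracks the weakest character confidence in a read, and refuses to commit the result when that value falls below a threshold. The `MIN_CHAR_CONFIDENCE` value of 0.90, the `ReadResult` type, and `gated_decode` are all illustrative names and numbers.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical threshold; a real system would calibrate this per use case.
MIN_CHAR_CONFIDENCE = 0.90


@dataclass
class ReadResult:
    text: Optional[str]        # None means "do not commit this read"
    weakest_confidence: float  # lowest per-character probability in the read
    needs_review: bool         # True when the read is routed to a human or re-scan


def gated_decode(position_probs: List[Dict[str, float]],
                 threshold: float = MIN_CHAR_CONFIDENCE) -> ReadResult:
    """Decode greedily, but abstain if any character is below the threshold."""
    chars = []
    weakest = 1.0
    for probs in position_probs:
        best = max(probs, key=probs.get)
        chars.append(best)
        weakest = min(weakest, probs[best])
    if weakest < threshold:
        # Abstain: the read is not trustworthy enough to update downstream systems.
        return ReadResult(text=None, weakest_confidence=weakest, needs_review=True)
    return ReadResult(text="".join(chars), weakest_confidence=weakest, needs_review=False)


# Hypothetical reads: one clean, one with a near-tie at position 3.
clean_read = [{"A": 0.98}, {"7": 0.97}, {"8": 0.99}, {"Q": 0.99}]
smudged_read = [{"A": 0.98}, {"7": 0.97}, {"8": 0.51, "3": 0.49}, {"Q": 0.99}]

print(gated_decode(clean_read).text)    # A78Q
print(gated_decode(smudged_read).text)  # None
```

A single softmax probability is a crude confidence signal, and models can be confidently wrong, so in practice the threshold only helps if the scores are calibrated against real dock-floor conditions.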

The most demanding logistics operators are no longer asking whether AI works in a controlled environment. They are asking whether it is engineered for the conditions that actually exist on the dock floor, in the fulfillment center, and at the carrier gate.
